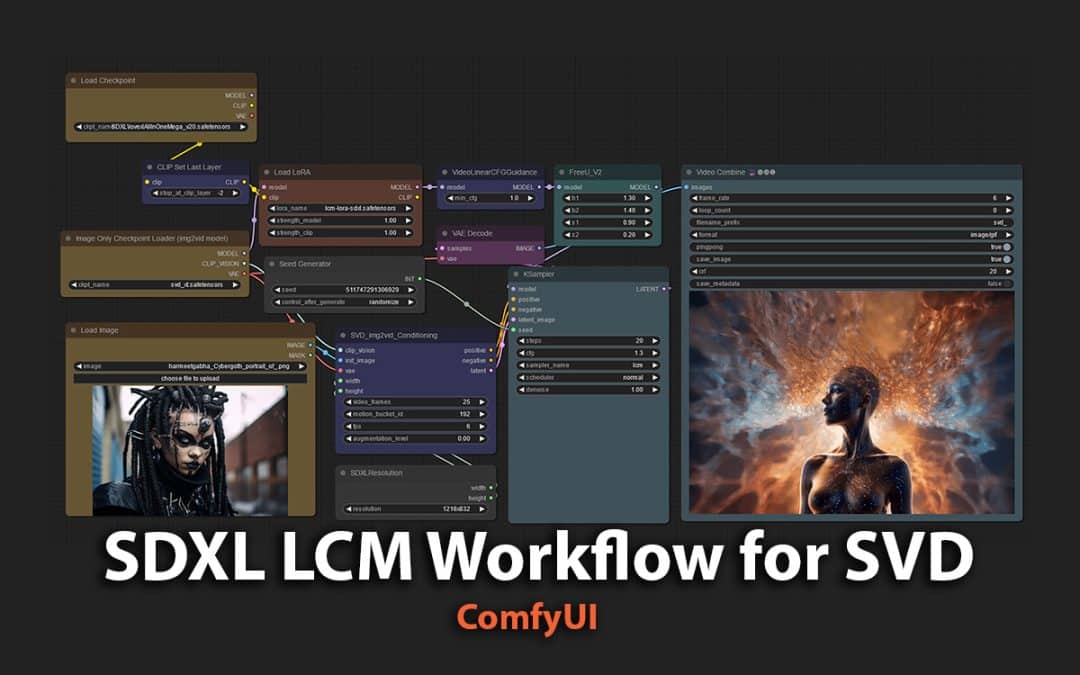

Stable Video Diffusion (SVD), which I covered in an earlier post about its release (Stable Video Diffusion using ComfyUI), is rapidly taking over the internet, and my workflow too. I find myself playing around with various image-to-video setups, and so far one of my favourites is a modified version from Nuralunk.

The workflow combines SVD and an SDXL model with the LCM LoRA, which you can download (see Latent Consistency Model (LCM) SDXL and LCM LoRAs). You can use it to create animated GIFs or video outputs.

The workflow uses some custom nodes, so if you get an error, just install the missing custom nodes using ComfyUI Manager.

Select the image you want to animate and define the SDXL dimensions you want, e.g. 1316 x 832px, which will be the dimensions of the final animated video. By default, the workflow is set up to generate 25 frames and create a 6 frames per second (FPS) GIF. However, you can push to a higher FPS; I’ve been able to go up to 24 FPS in some of my experiments.
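As a rough guide to how the frame count and FPS interact, the clip length is simply the number of frames divided by the FPS. The snippet below is a minimal sketch of that arithmetic; the function name `clip_duration_seconds` is made up for illustration and is not part of ComfyUI or the workflow.

```python
def clip_duration_seconds(num_frames: int, fps: float) -> float:
    """Return the playback length in seconds for a clip of num_frames at fps."""
    if num_frames < 0:
        raise ValueError("num_frames must not be negative")
    if fps <= 0:
        raise ValueError("fps must be positive")
    return num_frames / fps


if __name__ == "__main__":
    # The workflow defaults mentioned above: 25 frames at 6 FPS.
    print(f"{clip_duration_seconds(25, 6):.2f} s")   # prints "4.17 s"
    # The same 25 frames played back at 24 FPS.
    print(f"{clip_duration_seconds(25, 24):.2f} s")  # prints "1.04 s"
```

So the same 25 frames give a clip of a little over four seconds at 6 FPS, but only about one second at 24 FPS.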

In my experiments I set motion_bucket_id to various values such as 128, 192 and 256 (I just stuck with multiples of 64); however, you can try any values you like.

The Video Combine node also has a fun feature called pingpong, which creates a looping result by playing forward and then back through the generated frames, and it looks nice. Before I discovered this option I was using an external video tool to loop the video, so this is a real time saver. It also means you end up with a clip roughly twice as long.
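To illustrate the idea behind pingpong, here is a minimal sketch in plain Python. It shows one way such a loop can be built and is not taken from the node's code: the function name `pingpong` is hypothetical, and the choice to skip the first and last frames on the way back (so they are not shown twice in a row when the clip repeats) is my assumption.

```python
from typing import List, TypeVar

Frame = TypeVar("Frame")


def pingpong(frames: List[Frame]) -> List[Frame]:
    """Return frames played forward, then backward, for a seamless loop.

    The backward pass omits the last and first frames so that neither
    endpoint is duplicated when the result is looped (an assumption of
    this sketch).
    """
    # frames[-2:0:-1] walks from the second-to-last frame down to index 1.
    return frames + frames[-2:0:-1]


if __name__ == "__main__":
    # Frame indices stand in for real images here.
    print(pingpong([0, 1, 2, 3]))  # prints [0, 1, 2, 3, 2, 1]
```

Under this sketch, 25 generated frames become 48 frames, which is 8 seconds at 6 FPS, so the clip is close to (but not exactly) twice as long.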

Showcase of the Results

I uploaded several images that I had created and started to experiment with them. Below you will see many results produced as MP4 videos and GIF images. It is interesting how, in some images, SVD makes the subject blink.

I hope this inspires you to create your own animated clips or GIFs using this workflow. Don’t forget to share your results in the comments below; I’d love to see what you create. You can always tag me or the WWAI_Art handle on X.

How are you maintaining such crisp consistency in the video? I used your workflow, but my video melts into a blurry painting. Are there certain parameters I should use, and where do we download the model lovexlallinone?

The same settings as in my workflow. Is the image you are using sharp?

Same question: where can we download the model lovexlallinone? Can we use another SDXL model such as sd_xl_base_1.0.safetensors instead? Also, if we use LCM, why does the KSampler still need 20 steps, and why is it still so time-consuming?

You can download it from Civitai: https://civitai.com/models/153202/lovexl-all-in-one-mega-update. Note that the site can be NSFW, so browse with caution.