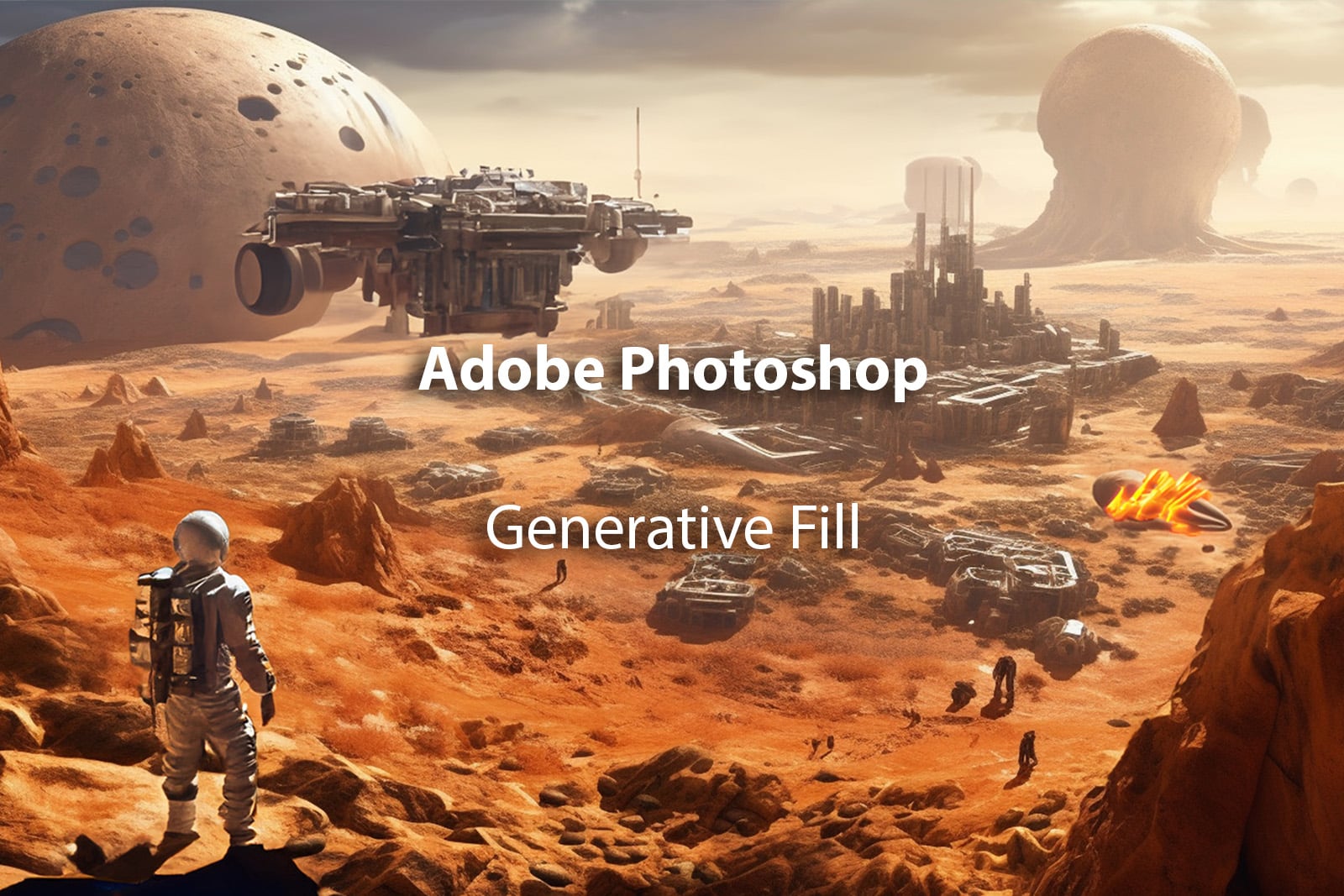

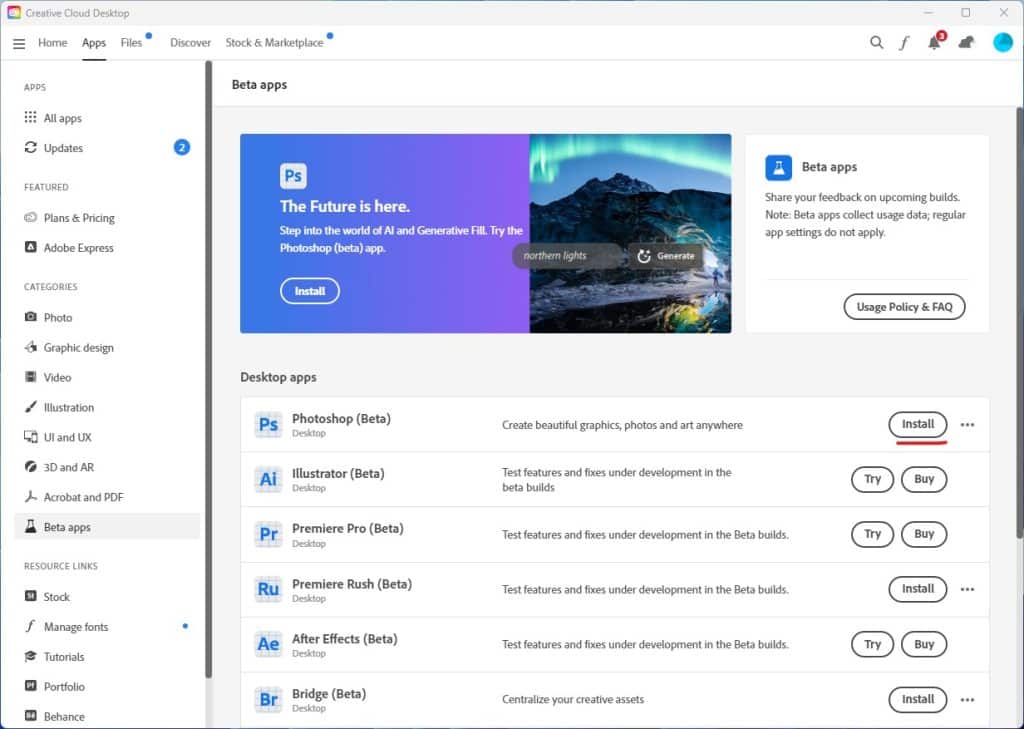

Photoshop Beta version 24.6 was released a few days ago, and it's spreading like wildfire across social media. I figured it was worth the hype and decided to install it.

This version introduces Generative Fill, Adobe's latest feature, which combines the power of Photoshop with Firefly generative image creation. Adobe has also published a full tutorial on how to use the feature on its website.

There are a few ways you can use Generative Fill; let's explore them in this post.

Extending an Image

In the AI space, this technique is often referred to as outpainting: you start with a base image and expand its borders, generating new parts of the image that were never there.

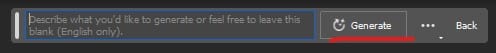

Open Photoshop Beta and, using the Crop tool, expand the canvas dimensions however you like. Now draw a selection that covers the empty area and includes a small part of the existing image, as shown below. The Generative Fill box will appear, where you can type a prompt or leave it empty.

Don't type anything; just click Generate and it will extend the existing image based on its contents.

You will get three options to choose from, and you can cycle through them to decide which you like best.

TIP: If you select only the empty area and ask Photoshop to generate, it will still do a good job, but you may see a slight edge line between the two layers. Selecting part of the original helps blend the two.

Here is the image expanded from its original square ratio to a more cinematic crop.

Here are both the original and extended images.
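To make the overlap idea concrete, here is a minimal Python sketch of the arithmetic behind the steps above. It is not Photoshop's API or Adobe's method; `Rect` and `plan_outpaint` are hypothetical names, and the 16:9 target and 64-pixel overlap are assumptions chosen only for illustration. It works out how wide the canvas becomes when a square image is widened symmetrically, and which two selections cover the empty strips plus a thin slice of the original.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rect:
    """Pixel rectangle; right and bottom edges are exclusive."""
    left: int
    top: int
    right: int
    bottom: int


def plan_outpaint(width, height, target_ratio, overlap):
    """Plan a horizontal canvas extension (hypothetical helper).

    Returns the new canvas width, where the original image sits on the
    widened canvas, and the selections to fill. Each selection covers one
    empty strip plus `overlap` pixels of the original so the generated
    content can blend into it.
    """
    overlap = min(overlap, width)  # never select more than the image itself
    new_width = max(width, round(height * target_ratio))
    pad_left = (new_width - width) // 2  # keep the original centered

    original = Rect(pad_left, 0, pad_left + width, height)
    selections = []
    if original.left > 0:
        # Left strip, reaching `overlap` pixels into the original.
        selections.append(Rect(0, 0, original.left + overlap, height))
    if original.right < new_width:
        # Right strip, starting `overlap` pixels inside the original.
        selections.append(Rect(original.right - overlap, 0, new_width, height))
    return new_width, original, selections
```

For a 1024 x 1024 image, `plan_outpaint(1024, 1024, 16 / 9, 64)` gives a canvas 1820 pixels wide with the original placed at x = 398 to 1422, and two selections that each reach 64 pixels into it. Setting `overlap` to 0 corresponds to selecting only the empty area, which is the case where the edge line tends to show.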

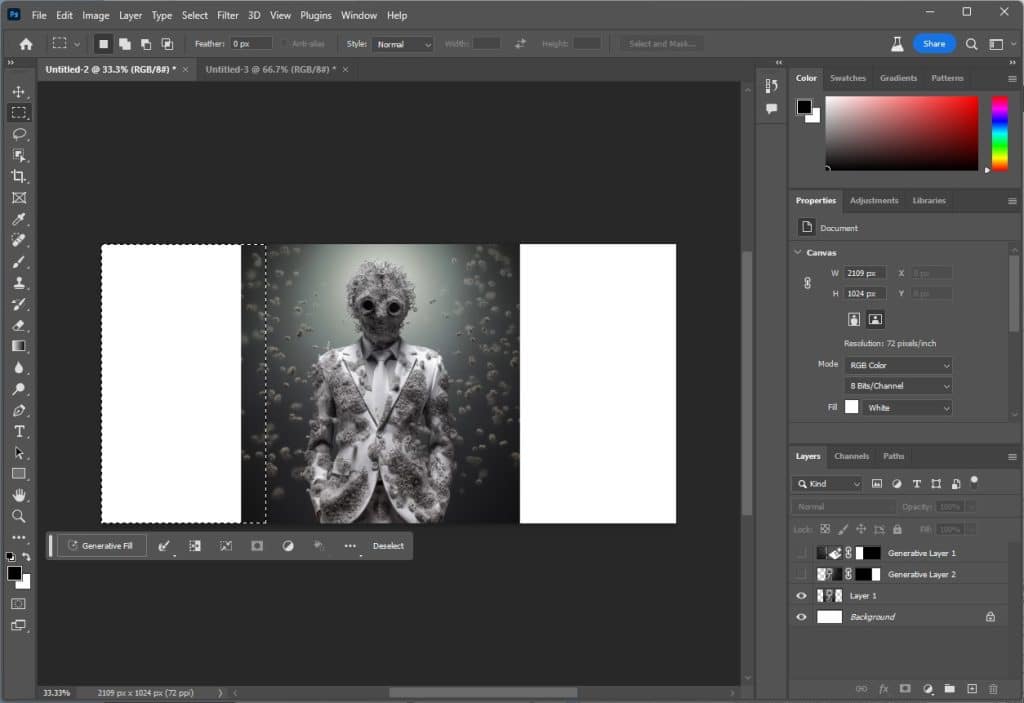

Generate an Object

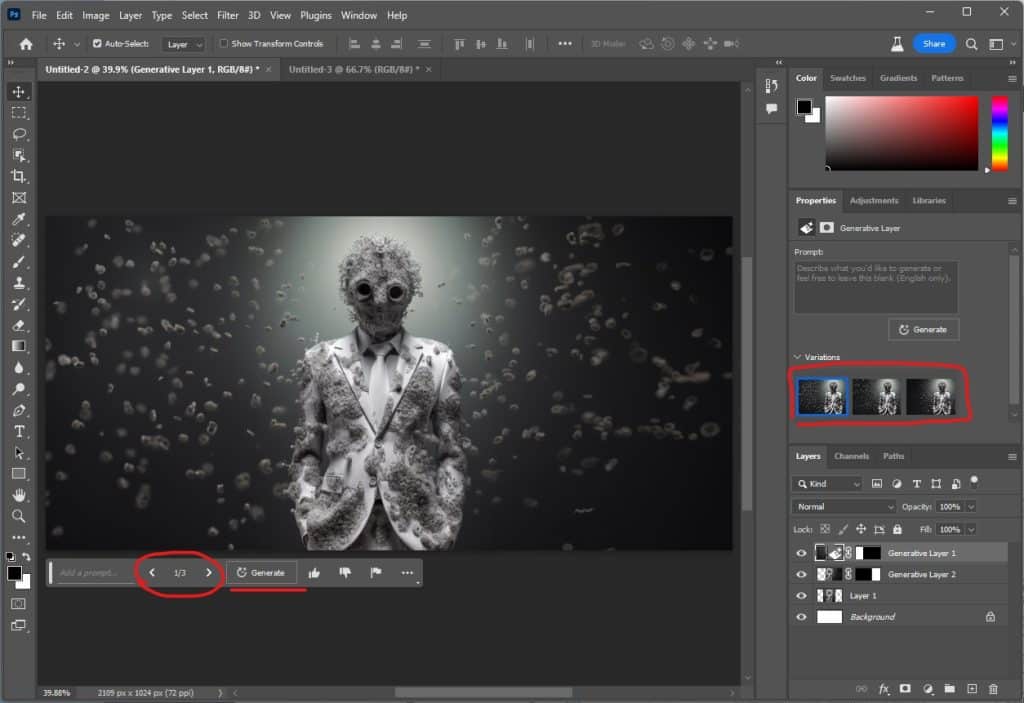

This is often referred to as inpainting in the AI space: you take an image and use text prompts to generate new parts inside it. We start with the image below and have selected the astronaut as the subject we want to replace.

The prompt is: astronaut in a spacesuit on mars, holding railgun

Three images will be generated, and you can cycle through them to find the one that fits your image, or click Generate again to get three more.

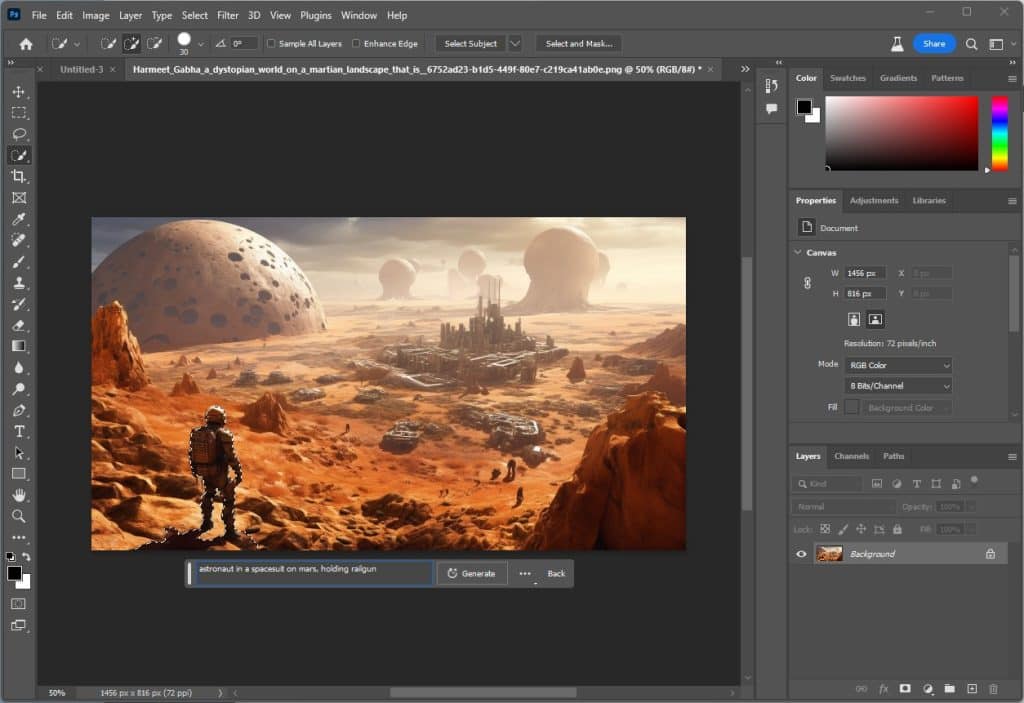

Now let’s add a spaceship-like object to the image to make it look like it’s out of this world.

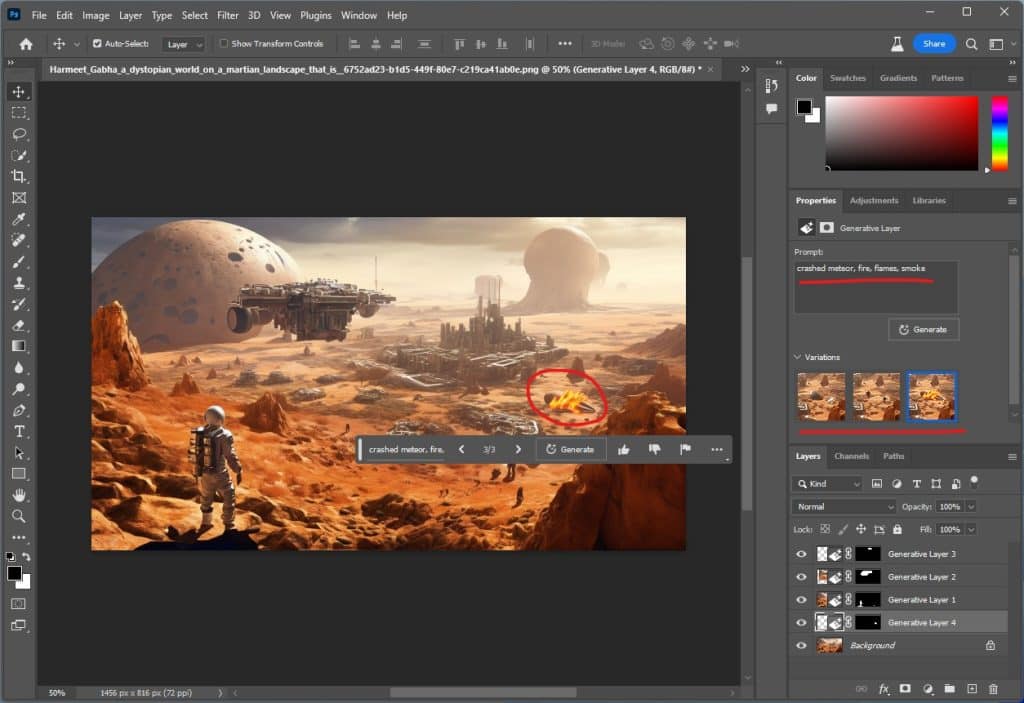

Let’s add a crashed meteor to the landscape. Although I tried several times, it did not produce any good results. I finally accepted the generation below, which looks like a space saucer that may have crashed.

The final image is below. All in all, not bad, but when you compare this with Midjourney the quality of the generation is not as good. However, there is no Midjourney plugin for PS, so maybe this is something the Midjourney team will pursue.

Preview Video

I also recorded a preview video where I showcase the features above with another starting image generated using Midjourney.
