PhotoMaker is one of the new AI tools that many people say will replace LoRA training and IPAdapter for re-creating a face consistently. Unlike a LoRA, it does not require any training and can regenerate the reference face with ease, or so they say!

In this post I explore how PhotoMaker works and share samples and findings from my testing. You can access the PhotoMaker repo and follow its instructions to spin up your own instance. However, I ran into all sorts of issues when installing it on my Windows 11 PC. I later discovered through the repo's Issues list that a fork had been created for Windows users, and following that fork's instructions worked the first time around.

Installation

Installing this on your PC is quite straightforward as long as you use the Windows fork of the repo. There are a few main things to install, which are listed in the Installation section of the repo.

- Install Python

- Install Git

- Install the Visual Studio Redistributable

- Run the commands listed (grab a tea or coffee while this happens)
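Before running the install commands, it can help to confirm that the first two prerequisites are actually visible from your terminal. The snippet below is a minimal sketch of such a check, not part of the repo: `check_prerequisites` is a hypothetical helper name, the minimum Python version of 3.8 is my assumption (check the repo's Installation section for the real requirement), and it does not check the Visual Studio Redistributable.

```python
import shutil
import sys


def check_prerequisites(min_python=(3, 8)):
    """Report whether Python and Git look usable from this environment.

    min_python is an assumed minimum version, not a value taken from the repo.
    """
    return {
        # Compare the running interpreter's version against the assumed minimum.
        "python": sys.version_info >= min_python,
        # shutil.which returns None when the executable is not on PATH.
        "git": shutil.which("git") is not None,
    }


for name, ok in check_prerequisites().items():
    print(f"{name}: {'found' if ok else 'MISSING'}")
```

If either line prints MISSING, fix that install (or your PATH) before running the repo's commands.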

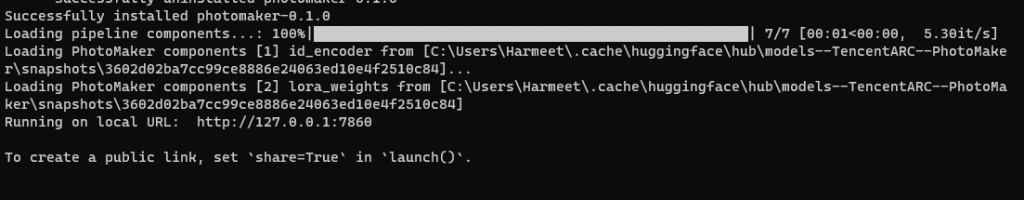

To run it, simply execute the GUI.bat file, which downloads the models on first execution (tea or coffee helps again while you wait). Subsequent startups are faster!

Click on the local URL http://127.0.0.1:7860 (Ctrl+click) to launch the Gradio user interface.
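If the page does not load, the app may still be downloading models or may not have started. As a rough sketch, you can test whether anything is accepting connections on that address. `is_listening` is a hypothetical helper of mine, and the host and port are simply the ones from the URL above.

```python
import socket


def is_listening(host="127.0.0.1", port=7860, timeout=1.0):
    """Return True if something accepts TCP connections on host:port."""
    try:
        # create_connection raises OSError if the connection is refused or times out.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


print("Gradio UI reachable:", is_listening())
```

This only tells you that a server is listening on the port, not that it is PhotoMaker specifically.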

Running PhotoMaker

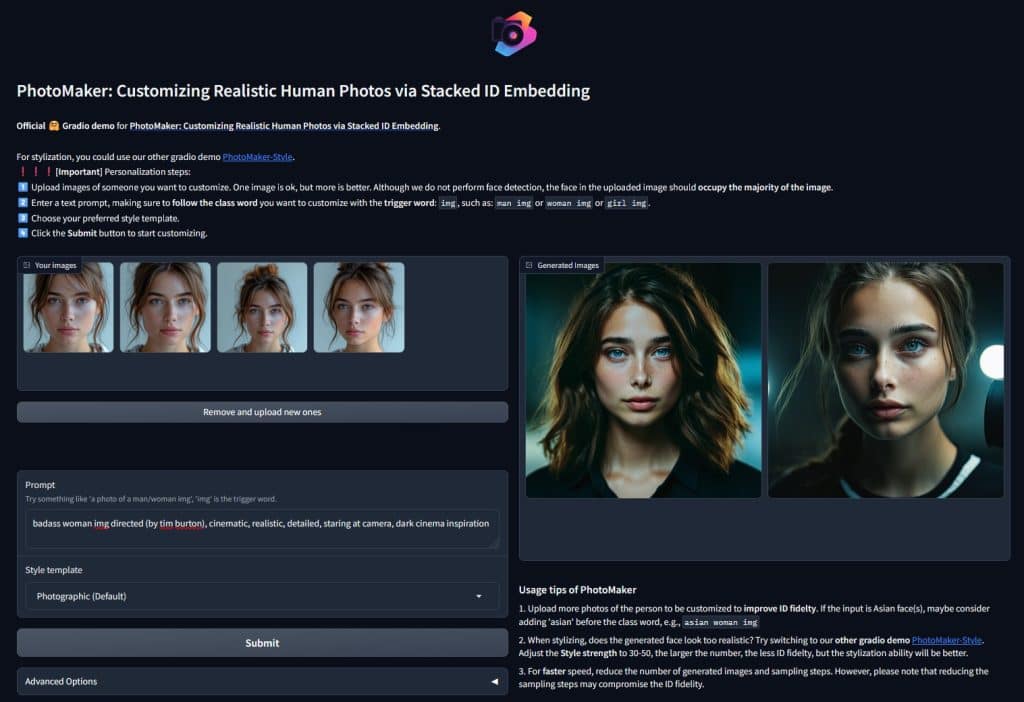

To start using it, upload a few images of the sample face. Ideally each uploaded image should be mostly of the face. In my testing I used 4 cropped images of a face (AI-generated in Midjourney) as reference images.

Once the images are uploaded, enter the prompt and make sure you use the trigger word “img” right after the class word, e.g. “man img” or “woman img”. You can then choose any of the pre-defined style templates provided in the Gradio app; they simply add to your prompt to stylize it, that's all.
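To make the two ideas above concrete, here is a small sketch of a prompt check and a style template. Both function names are hypothetical, the example template text is made up for illustration and is not one of the app's actual templates, and the rule that “img” must come after another word is my reading of the “man img” pattern.

```python
TRIGGER_WORD = "img"


def has_trigger_word(prompt, trigger=TRIGGER_WORD):
    """Check that the trigger appears as its own word, after some class word."""
    words = prompt.lower().replace(",", " ").split()
    # i > 0 means at least one word (the class word, e.g. "man") precedes it.
    return any(w == trigger and i > 0 for i, w in enumerate(words))


def apply_style(prompt, template):
    """Wrap the user's prompt in a style template containing {prompt}."""
    return template.replace("{prompt}", prompt)


# Hypothetical template, for illustration only.
example_template = "cinematic photo of {prompt}, shallow depth of field"

prompt = "a man img wearing a suit"
print(has_trigger_word(prompt))
print(apply_style(prompt, example_template))
```

The point of the second function is that a style does nothing special: it only surrounds your text with extra descriptive words.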

Generation takes some time depending on your GPU; in my case it takes about 30 seconds on my PC with a 16GB RTX 4080.

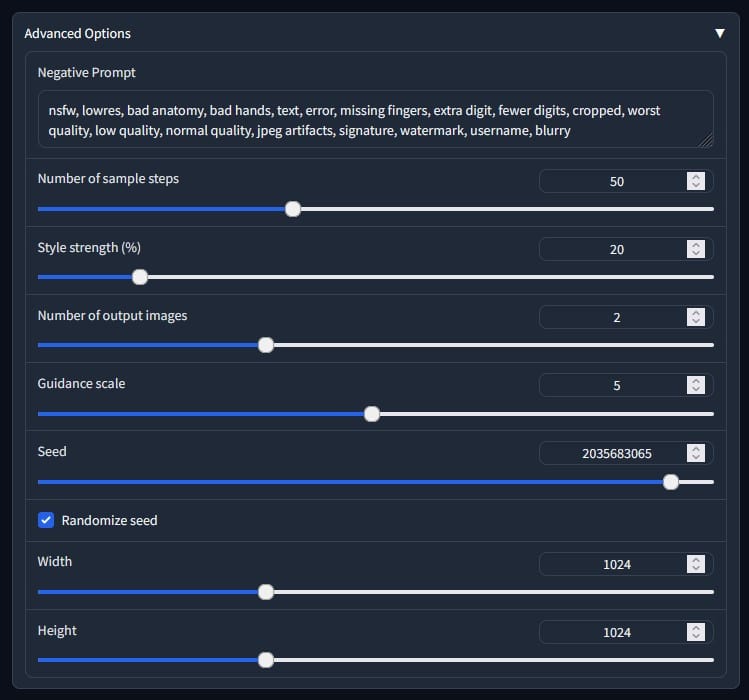

Advanced options let you customise a few of the generation parameters. I left most of them at their defaults, except size, which I changed to generate portrait images.

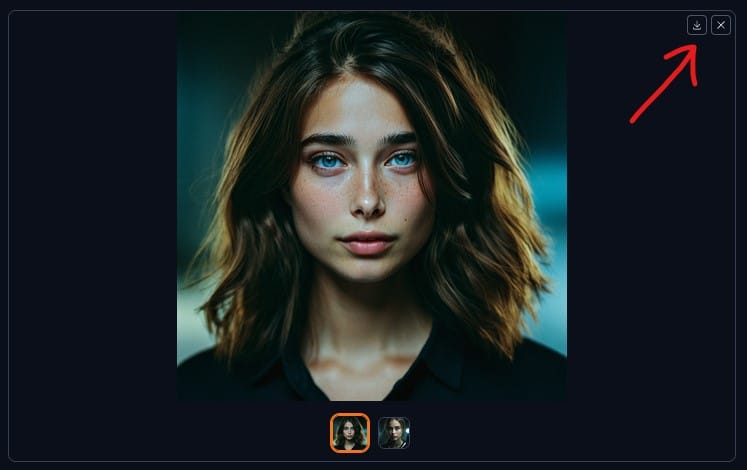

Once your generations are done, click on the download icon to save them, because they are not saved to an output directory by default. Otherwise you will lose the previous images when you run again.
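Since nothing is kept automatically, I find it useful to file each downloaded image away under a unique name. The following is a minimal sketch of that idea using only the standard library. `archive_image` and the `outputs` folder name are my own choices, not something the app provides.

```python
import shutil
from datetime import datetime
from pathlib import Path


def archive_image(src, out_dir="outputs"):
    """Copy a downloaded image into out_dir under a unique timestamped name."""
    src = Path(src)
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)

    stamp = datetime.now().strftime("%Y%m%d-%H%M%S")
    dest = out / f"{src.stem}-{stamp}{src.suffix}"

    # If a file with this name already exists, add a counter instead of overwriting.
    n = 1
    while dest.exists():
        dest = out / f"{src.stem}-{stamp}-{n}{src.suffix}"
        n += 1

    shutil.copy2(src, dest)
    return dest


# Example (the path is a placeholder for wherever your browser saved the file):
# archive_image("Downloads/image.png")
```

Because each copy gets its own name, running the generation again never replaces an image you already archived.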

You can also check out my full walk-through video on this below.

Assessment of the Results

Now it takes a keen eye to compare the original reference with the generated image. What do you see and think?

Having compared several examples, my finding is that the generations are consistent in their interpretation of the face, but they do not reproduce exactly the same face. The generated face has some resemblance to the original reference face, but it's not an exact copy.

I tried many different faces to reach this conclusion, including real human faces and my own. The results did not regenerate the same face. I find that a trained LoRA is still a better solution, because a LoRA stores a lot more information and its output is therefore closer to the original training images.

This no-training approach might be a good concept, but it still needs work before it can produce faces that are uncannily close clones of the reference. However, as with anything new, the first cut is never the last, so hopefully future versions of this approach to regenerating a face will improve the results.
