Paperspace is a great alternative to Google Colab, and I have switched over to it completely and cancelled my Colab subscription. However, you might feel disadvantaged because you lose the Google Drive integration that Colab notebooks offer and that is not available in Paperspace.

Paperspace does let you upload a model directly to the virtual machine you are running, but the upload is painfully slow. If you only want to use the standard Stable Diffusion models, these have publicly hosted storage that can be mounted on your virtual machine, which eliminates the need to upload the model.

The problem remains, however, when you want to run custom fine-tuned Stable Diffusion CKPT files in your environment. The solution is quite easy to implement and very fast (a 2GB upload in under 30 seconds).

There are three key steps:

- Upload your desired CKPT file to your Google Drive (yes that’s right)

- Share the file via the Link

- Add some custom code to your Paperspace Notebook and run it to download the file to your models folder

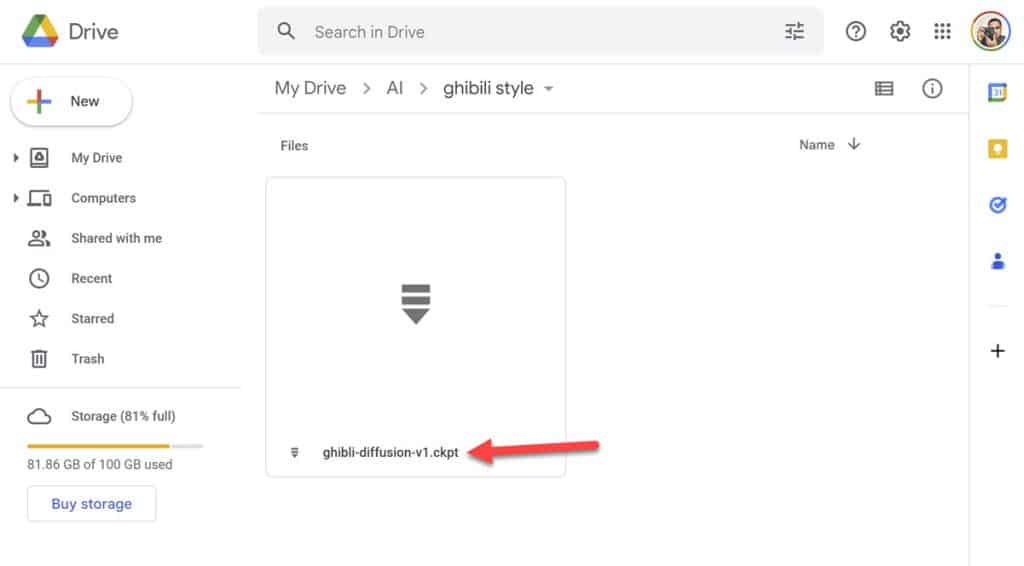

Upload the CKPT

Download the model from Hugging Face or a GitHub repo, wherever it is hosted, and save it on your local computer. Then upload it to Google Drive in your desired folder.

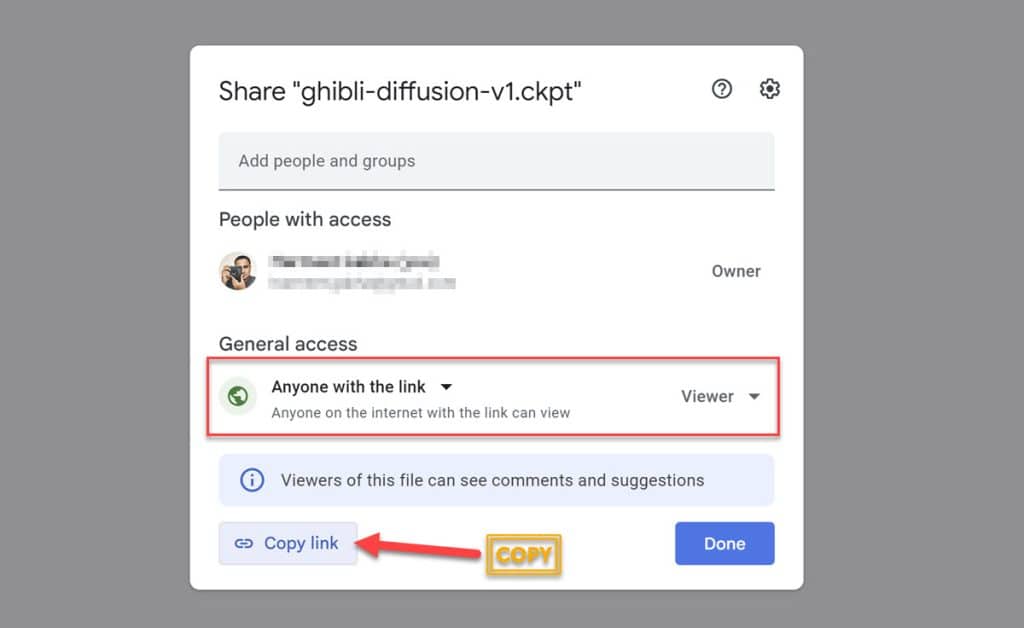

Share the CKPT File

Now, right-click on the file and make it shareable via link. General access should be set to “Anyone with the link” (of course, you will be the only one who knows the link, so don’t share it with anyone). Copy the link and save it somewhere temporarily because you will need it later. The link you get should look something like this: https://drive.google.com/file/d/1rPu7NizmGnUew5rd8yZx0jeD3X5LDaqX/view?usp=sharing

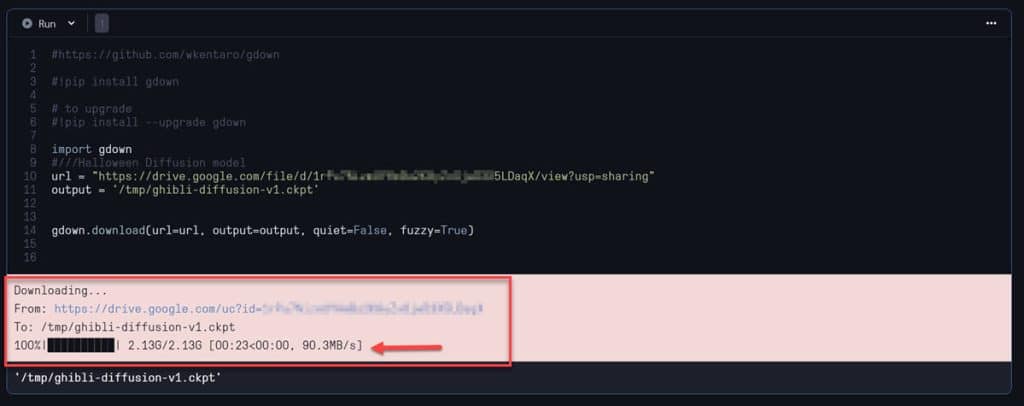

Paperspace Setup and Download

Open your Notebook in Paperspace (.IPYNB file) and create a new code block by clicking on <Add Code>. Paste the following code into the block. This code uses a library called gdown, which lets you fetch files hosted on Google Drive into the Paperspace environment.

- url – the URL you copied earlier from Google Drive

- output – the path where you want to store the .CKPT file, e.g. /notebooks/stable-diffusion-webui/models. This is crucial because if your application cannot see or access the .CKPT file, you won’t be able to use it.

#https://github.com/wkentaro/gdown

!pip install gdown

# to upgrade

!pip install --upgrade gdown

import gdown

#Ghibili Diffusion model

url = "https://drive.google.com/file/d/1rPu7NizmGnUew5rd8yZx0jeD3X5LDaqX/view?usp=sharing"

output = '/notebooks/stable-diffusion-webui/models/ghibili-diffusion.ckpt'

gdown.download(url=url, output=output, quiet=False, fuzzy=True)

Click Run to execute the code, which will install gdown or update it if it is already installed. It will then fetch the file from the specified url and download it to the output location. In my example run, it fetched 2.13GB from Google Drive in less than 30 seconds.

That is it!!

For any other model CKPT files you want to use, you can copy and paste the last 3 lines of the code and replace the url and output values as needed. In my case I save these models to the /tmp folder, which is purged when you shut down the machine. As it takes next to no time to download them again, I prefer this to downloading them into the /storage folder, which is metered in Paperspace and can incur excess usage charges depending on your plan.
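If you fetch several models each session, the copy/paste approach can be wrapped in a small loop. The following is only a sketch under a few assumptions: the helper names `plan_downloads` and `download_all` are made up for illustration, the commented-out second entry is a placeholder, and `/tmp` is used as the destination as described above. The `gdown.download` call uses the same arguments as the code earlier in this post.

```python
import os


def plan_downloads(models, dest_dir="/tmp"):
    """Turn {filename: share_url} into a list of (url, output_path) pairs."""
    return [(url, os.path.join(dest_dir, name)) for name, url in models.items()]


def download_all(models, dest_dir="/tmp"):
    """Download every model in `models` into dest_dir using gdown."""
    import gdown  # imported here so the planning helper works without gdown

    os.makedirs(dest_dir, exist_ok=True)
    for url, output in plan_downloads(models, dest_dir):
        # Same call as in the single-file example above
        gdown.download(url=url, output=output, quiet=False, fuzzy=True)


# Filename -> Google Drive share link
models = {
    "ghibili-diffusion.ckpt": "https://drive.google.com/file/d/1rPu7NizmGnUew5rd8yZx0jeD3X5LDaqX/view?usp=sharing",
    # "another-model.ckpt": "<share link for another model>",  # hypothetical entry
}

# Show what would be downloaded and where
for url, output in plan_downloads(models):
    print(output, "<-", url)

# download_all(models)  # uncomment in your notebook to actually download
```

Change `dest_dir` if your application expects the models somewhere other than /tmp.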

Hope you found this post useful and if you have any questions or comments please leave them below.

Update 28 November 2022

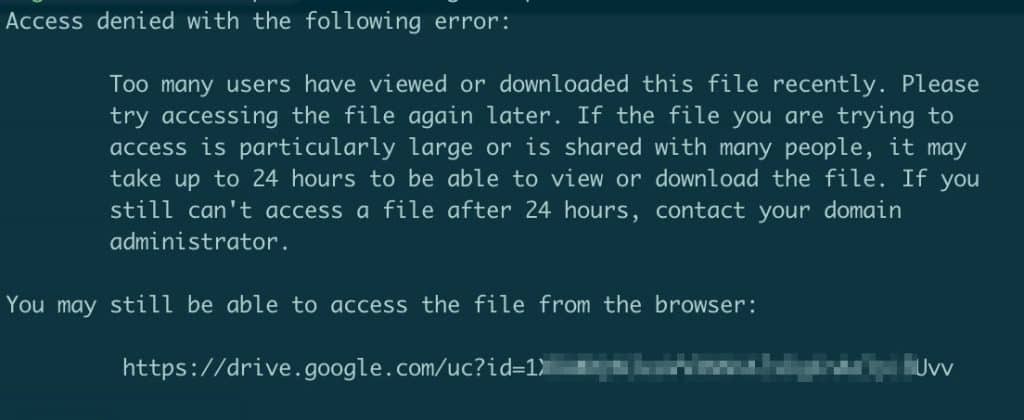

I found an issue when using gdown with very large files. Google’s warning that it cannot virus-scan large files can prevent the method above from working, and you will get an error:

Access denied with the following error: Too many users have viewed or downloaded this file recently. Please try accessing the file again later. If the file you are trying to access is particularly large or is shared with many people, it may take up to 24 hours to be able to view or download the file. If you still can’t access a file after 24 hours, contact your domain administrator.

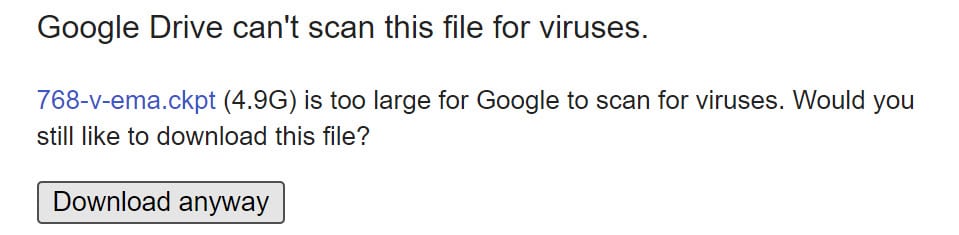

When you click on the link in the error message, you get a page from Google Drive indicating “Google Drive can’t scan this file for viruses.” Because of this warning, gdown cannot successfully download the large file using the url and output method described above. The solution is quite easy, though: replace the code above with the technique below.

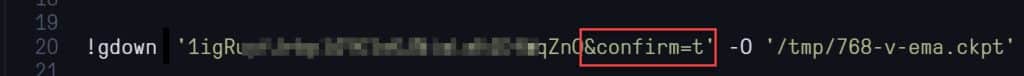

!gdown '<fileID>&confirm=t' -O '/<output folder>/<output filename>'

fileID is the alphanumeric string that identifies the file in the URL (after id=, or between /d/ and /view in a share link like the one shown earlier). Make sure you add &confirm=t, and note the ! before gdown (we want to run it as a command-line command). Use -O to specify the output location of the file.
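If you'd rather not pick the fileID out of the link by hand, a small helper can do it. This is a minimal sketch: the function name `extract_file_id` is made up, it only handles the two link shapes I am aware of (`/file/d/<id>/...` and `...?id=<id>`), and the output path in the printed command is just an example.

```python
import re
from typing import Optional

# Assumption: only these two common Google Drive link shapes are handled.
_ID_PATTERNS = [
    re.compile(r"/file/d/([A-Za-z0-9_-]+)"),  # .../file/d/<id>/view?usp=sharing
    re.compile(r"[?&]id=([A-Za-z0-9_-]+)"),   # .../uc?id=<id>
]


def extract_file_id(share_url: str) -> Optional[str]:
    """Return the Drive file ID found in share_url, or None if nothing matches."""
    for pattern in _ID_PATTERNS:
        match = pattern.search(share_url)
        if match:
            return match.group(1)
    return None


url = "https://drive.google.com/file/d/1rPu7NizmGnUew5rd8yZx0jeD3X5LDaqX/view?usp=sharing"
file_id = extract_file_id(url)
print(file_id)

# Build the command-line form described above (example output path)
print(f"!gdown '{file_id}&confirm=t' -O '/tmp/ghibili-diffusion.ckpt'")
```

Copy the printed command into a new code block and run it.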

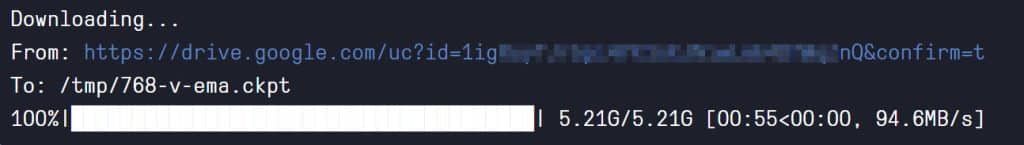

Once you run this the file will be successfully downloaded to the specified output location.
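To double-check that what landed on disk is really a model and not a leftover from a blocked download, you can run a quick sanity check. This is a rough heuristic of my own, not something gdown provides: the function name `looks_like_checkpoint` and the 100MB minimum size are assumptions, and it only checks that the file exists, is reasonably large, and does not start like an HTML page.

```python
import os


def looks_like_checkpoint(path, min_bytes=100 * 1024 * 1024):
    """Heuristic check: file exists, is large enough, and is not an HTML page."""
    if not os.path.isfile(path):
        return False
    if os.path.getsize(path) < min_bytes:
        return False
    # Read the first few bytes and look for the start of an HTML document
    with open(path, "rb") as f:
        head = f.read(64).lstrip().lower()
    return not (head.startswith(b"<!doctype") or head.startswith(b"<html"))


# Example path; change it to wherever you saved your model
path = "/tmp/ghibili-diffusion.ckpt"
print(path, "looks OK" if looks_like_checkpoint(path) else "is missing or suspicious")
```

If the check fails, run the download again or open the share link in a browser to see what Google Drive is returning.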
