Woke up to the news that a new Stable Diffusion release called “Stable Cascade” has come out over night. So naturally this is creating a lot of buzz on various socials but there aren’t many resources out there to start to demo or play with it just yet. Give it a week and there will be many things available on Automatic1111 and ComfyUI.

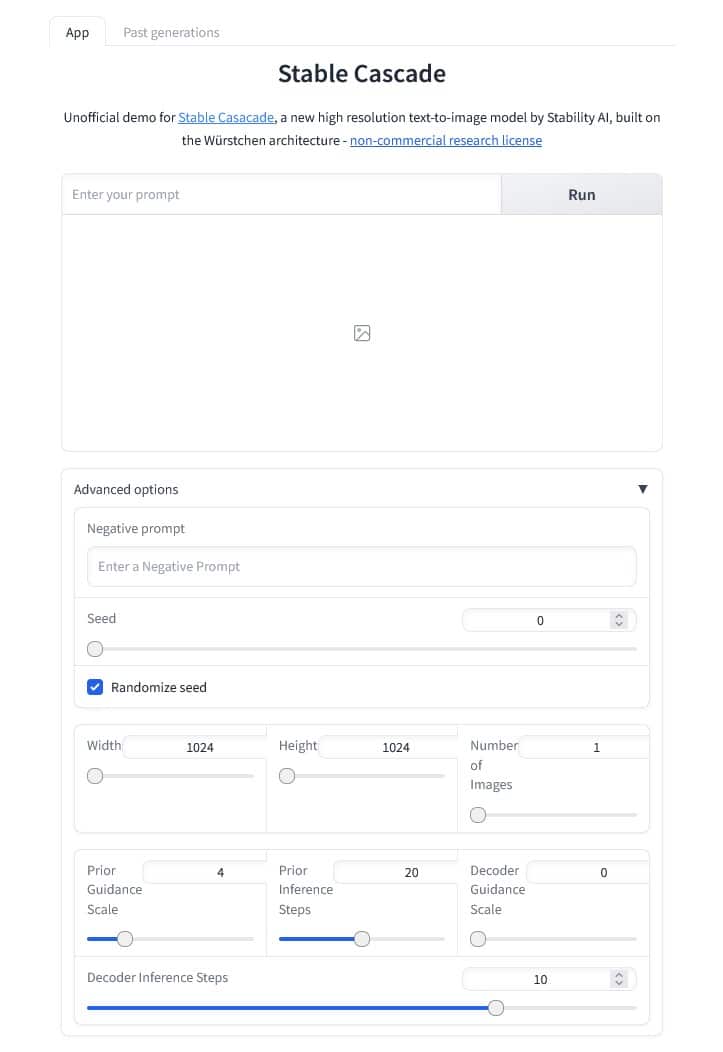

However as you are curious for a Demo, here is where you can go to get it. Just head over to this HuggingFace page. Enter your prompt and click on Run, wait a few seconds for GPU to be assigned and you have the results.

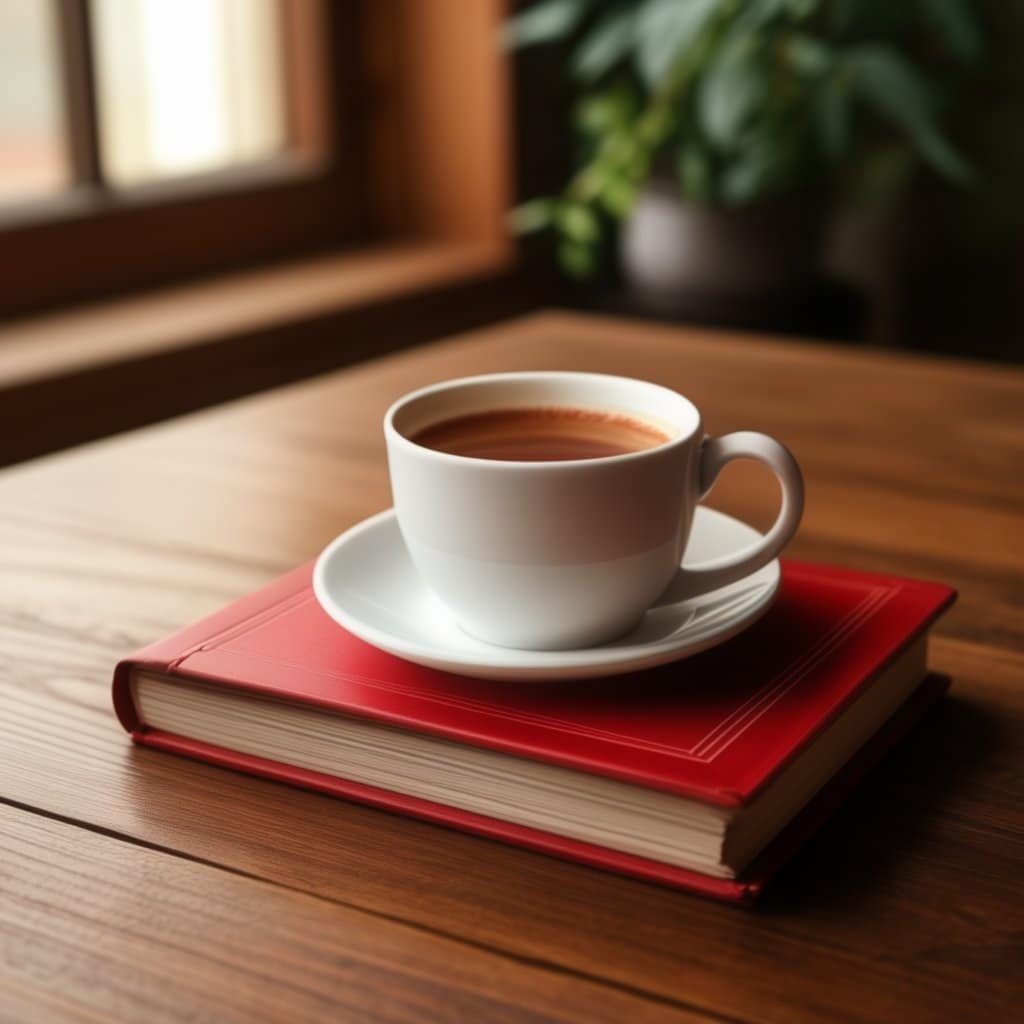

The result looks pretty darn good as well, how well the image is composed and the text is correctly paced. And as post this article on such a special day of course my prompt has to match.

The interface is quite easy to follow but also has some advanced settings to fine tune. I only played with the width and height which goes from 1024 to 1536 so you can create 1:1 ratio or 2:3 or 3:2 ratios for your images.

The results are very nice so far and prompt understanding is more like natural language but old prompts work as well.

About Stable Cascade

This model is built upon the Würstchen architecture and its main difference to other models like Stable Diffusion is that it is working at a much smaller latent space. Why is this important? The smaller the latent space, the faster you can run inference and the cheaper the training becomes. How small is the latent space? Stable Diffusion uses a compression factor of 8, resulting in a 1024×1024 image being encoded to 128×128. Stable Cascade achieves a compression factor of 42, meaning that it is possible to encode a 1024×1024 image to 24×24, while maintaining crisp reconstructions. The text-conditional model is then trained in the highly compressed latent space. Previous versions of this architecture, achieved a 16x cost reduction over Stable Diffusion 1.5.

Therefore, this kind of model is well suited for usages where efficiency is important. Furthermore, all known extensions like finetuning, LoRA, ControlNet, IP-Adapter, LCM etc. are possible with this method as well.

Samples

Here are a few samples image I created using the huggingface page.

Conclusion

Results are looking promising and cleaner that SDXL, composition and text rendering is definitely better along with natural language understanding when prompting. I look forward to doing more tests and experiments as the various UIs like Automatic1111 and ComfyUI start to support this new model, it won’t be long!!

If you'd like to support our site please consider buying us a Ko-fi, grab a product or subscribe. Need a faster GPU, get access to fastest GPUs for less than $1 per hour with RunPod.io